A slideshow video turns individual photos into a more complete and meaningful story. You can make the slideshow more engaging by adding text and other graphical effects.

This article will show you how to convert images into a video using two different tools: FFmpeg and the Shotstack API. We’ll also look at how to add an audio track to the video and how to add transition effects between the image slides.

Creating a video slideshow of images with FFmpeg

FFmpeg is an open source command line tool for processing video, audio, and other multimedia files and streams. It has a wide range of use cases, including creating videos from images, extracting images from a video, compressing videos, adding text to videos, adding or removing audio, and cutting segments from a video.

Some operating systems, such as Ubuntu, install FFmpeg by default, so you might find you already have it on your computer. You can check if it’s installed with the following command:

$ ffmpeg -version

If it prints a version number, you are good to go. Otherwise, head over to the FFmpeg website, then download and install a build for your particular OS.
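If you plan to drive FFmpeg from a script, the same check can be done in code. The following is a minimal Python sketch, not part of the original workflow; the function name ffmpeg_version is made up for illustration, and it only assumes that the binary is called ffmpeg and prints its version banner on the first line.

```python
import shutil
import subprocess
from typing import Optional


def ffmpeg_version(binary: str = "ffmpeg") -> Optional[str]:
    """Return the first line of `<binary> -version`, or None if it is not on PATH."""
    path = shutil.which(binary)
    if path is None:
        return None
    # check=False: a non-zero exit code is reported as "no version" rather than an exception.
    result = subprocess.run(
        [path, "-version"], capture_output=True, text=True, check=False
    )
    lines = result.stdout.splitlines()
    return lines[0] if lines else None


version = ffmpeg_version()
print(version or "FFmpeg not found; install it before continuing")
```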

Create a slideshow video from a sequence of images

To create a video from a sequence of images with FFmpeg, you need to specify the input images and output file. There are several ways you can specify the input images and we’ll look at examples of some of these.

FFmpeg format specifiers

If you have a series of images that are sequentially named, e.g. happy1.jpg, happy2.jpg, happy3.jpg, happy4.jpg, etc., you can use FFmpeg format specifiers to indicate the images that FFmpeg should use:

$ ffmpeg -framerate 1 -i happy%d.jpg -c:v libx264 -r 30 output.mp4

The above command takes a sequence of images as input with -i happy%d.jpg. FFmpeg searches for the lowest-numbered image (by default it checks the numbers 0 to 4) and uses that as the starting image. It then increments the number by one and, if that image exists, adds it to the sequence; the numbers that follow the starting image must be consecutive. You can specify the start image yourself by placing -start_number n before -i. If we added -start_number 3 to the above command, the starting image would be happy3.jpg.

Options are always written before the file they refer to, so in our example, -framerate 1 applies to the input images and -c:v libx264 -r 30 apply to the output file.

We use -framerate 1 to define how fast the pictures are read in, in this case, 1 picture per second. Omitting the framerate will default to a framerate of 25.

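-r 30 sets the framerate of the output video. If we omitted it, the output would inherit the input framerate, which is 1 frame per second here, and some players handle such low framerates poorly. With -r 30, FFmpeg duplicates frames so that each image still stays on screen for one second.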

The -c:v libx264 specifies the codec to use to encode the video. x264 is a library used for encoding video streams into the H.264/MPEG-4 AVC compression format.

You can see the resulting video below:

You can add -vf format=yuv420p or -pix_fmt yuv420p (when your output is H.264) to increase the compatibility of your video with a wide range of players:

$ ffmpeg -framerate 1 -i happy%d.jpg -c:v libx264 -r 30 -pix_fmt yuv420p output.mp4
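When the command is assembled by a script, the rule that options precede the file they apply to is easy to get wrong. The sketch below builds the argument list for the command above in Python; the function name slideshow_command and its parameters are hypothetical, and it only prints the command rather than running it.

```python
import shlex
from typing import List, Optional


def slideshow_command(
    pattern: str,
    output: str,
    input_fps: float = 1,
    output_fps: int = 30,
    start_number: Optional[int] = None,
) -> List[str]:
    """Build the ffmpeg argument list for a numbered image sequence."""
    # Input options (-framerate, -start_number) must come before -i.
    cmd = ["ffmpeg", "-framerate", str(input_fps)]
    if start_number is not None:
        cmd += ["-start_number", str(start_number)]
    cmd += ["-i", pattern]
    # Output options come after the input and before the output file name.
    cmd += ["-c:v", "libx264", "-r", str(output_fps), "-pix_fmt", "yuv420p", output]
    return cmd


cmd = slideshow_command("happy%d.jpg", "output.mp4", start_number=3)
print(shlex.join(cmd))
```

Passing the list to subprocess.run(cmd, check=True) would execute it, assuming ffmpeg is on the PATH and the images exist.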

Using a glob pattern

You can specify the input images to be used in the video with a glob pattern. The pattern *.jpg used below will select all files with names ending in .jpg from the current directory. The matching files are sorted according to LC_COLLATE, so the order is alphabetical rather than numerical: happy10.jpg sorts before happy2.jpg. This approach is useful if your image names sort into the right order but are not numbered consecutively.

$ ffmpeg -framerate 1 -pattern_type glob -i '*.jpg' -c:v libx264 -r 30 -pix_fmt yuv420p output.mp4

The above will result in a similar video to what we had before.
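Because the glob match is sorted as text, numbered files can end up in an unexpected order once there are ten or more of them. The following Python sketch illustrates the difference and one possible workaround (zero-padding the numbers). It uses Python's default string ordering as a stand-in for LC_COLLATE sorting, which is an approximation, and the helper names are invented for this example.

```python
import re
from typing import List, Union


def natural_key(name: str) -> List[Union[int, str]]:
    """Split a name into text and integer chunks so that 2 sorts before 10."""
    return [int(part) if part.isdigit() else part for part in re.split(r"(\d+)", name)]


def zero_padded(name: str, width: int = 3) -> str:
    """Pad the last number in a file name, e.g. happy2.jpg -> happy002.jpg."""
    return re.sub(r"(\d+)(?=\D*$)", lambda m: m.group(1).zfill(width), name)


names = ["happy1.jpg", "happy10.jpg", "happy2.jpg"]

text_order = sorted(names)                      # how a plain text sort sees them
numeric_order = sorted(names, key=natural_key)  # the order you probably want
padded_order = sorted(zero_padded(n) for n in names)

print(text_order)
print(numeric_order)
print(padded_order)
```

Renaming the files to the zero-padded form makes the text order and the numeric order agree, so the glob pattern picks them up in the intended sequence.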

FFmpeg piping

You can also use cat to pipe to ffmpeg:

$ cat *.jpg | ffmpeg -framerate 1 -f image2pipe -i - -c:v libx264 -r 30 -pix_fmt yuv420p output.mp4
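The pipe works because cat writes the raw bytes of each image back to back and FFmpeg reads that stream from standard input (the - after -i). A rough Python equivalent is sketched below; the function names are hypothetical, pipe_slideshow is defined but not called because it needs ffmpeg and real images, and the demonstration uses placeholder bytes rather than real JPEG data.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import List


def concat_image_bytes(paths: List[Path]) -> bytes:
    """Join the raw bytes of each file back to back, as `cat` would."""
    return b"".join(path.read_bytes() for path in paths)


def pipe_slideshow(paths: List[Path], output: str) -> None:
    """Feed the joined bytes to FFmpeg on stdin (requires ffmpeg on PATH)."""
    cmd = ["ffmpeg", "-framerate", "1", "-f", "image2pipe", "-i", "-",
           "-c:v", "libx264", "-r", "30", "-pix_fmt", "yuv420p", output]
    subprocess.run(cmd, input=concat_image_bytes(paths), check=True)


# Demonstrate only the joining step, with placeholder bytes instead of real JPEGs.
with tempfile.TemporaryDirectory() as tmp:
    first = Path(tmp, "a.jpg")
    second = Path(tmp, "b.jpg")
    first.write_bytes(b"first")
    second.write_bytes(b"second")
    joined = concat_image_bytes(sorted(Path(tmp).glob("*.jpg")))

print(joined)
```

This version reads every image into memory before starting FFmpeg, which is fine for a handful of photos but not for very large sets.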

Using the FFmpeg concat demuxer

You can use the concat demuxer to concatenate images listed in a file.

Below, we create a file named input.txt and list the images for the slideshow. Each image is followed by a duration in seconds (2 here) for which it will be displayed. The durations do not have to be the same for every image:

file 'happy1.jpg'
duration 2
file 'happy2.jpg'
duration 2
file 'happy3.jpg'
duration 2
file 'happy4.jpg'
duration 2
file 'happy5.jpg'
duration 2
file 'happy6.jpg'
duration 2

We then run the following command to output a video slideshow of the images:

$ ffmpeg -f concat -i input.txt -c:v libx264 -r 30 -pix_fmt yuv420p output.mp4
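Writing the list file by hand gets tedious for longer slideshows. As a sketch, the Python function below generates the same file/duration pairs from a list of names and durations. The function name concat_list and the repeat_last flag are assumptions made for this example; the flag reflects a suggestion in the FFmpeg wiki's slideshow guide to list the last image a second time without a duration, and it is off by default so the output matches the listing above.

```python
from typing import Iterable, Tuple


def concat_list(entries: Iterable[Tuple[str, float]], repeat_last: bool = False) -> str:
    """Return concat demuxer text: a file line and a duration line per image."""
    lines = []
    last_name = None
    for name, seconds in entries:
        # Note: names containing a single quote would need escaping; not handled here.
        lines.append(f"file '{name}'")
        lines.append(f"duration {seconds:g}")
        last_name = name
    if repeat_last and last_name is not None:
        lines.append(f"file '{last_name}'")
    return "\n".join(lines) + "\n"


entries = [(f"happy{i}.jpg", 2) for i in range(1, 7)]
text = concat_list(entries)
print(text, end="")
```

Saving the result with pathlib.Path("input.txt").write_text(text) produces a file that can be passed to the command above.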

Adding audio to the video slideshow

You can make your video slideshow more interesting by adding an audio track to it:

$ ffmpeg -framerate 1 -pattern_type glob -i '*.jpg' -i freeflow.mp3 \
-shortest -c:v libx264 -r 30 -pix_fmt yuv420p output6.mp4

The above adds a second input file with -i freeflow.mp3 which is an audio file.

The -shortest option ends the output when the shortest input ends. As our audio file is longer than the slideshow of the six images, the output video is as long as the slideshow.
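The arithmetic behind this is simple enough to sketch in Python. The audio length of 180 seconds is a made-up figure, since the article does not state how long freeflow.mp3 is, and real encoded durations can differ by a frame or so.

```python
def slideshow_seconds(image_count: int, input_fps: float) -> float:
    """Length of the image stream: each image lasts 1 / input_fps seconds."""
    return image_count / input_fps


def output_seconds(video: float, audio: float, shortest: bool) -> float:
    """With -shortest the output stops at the shorter stream, otherwise at the longer one."""
    return min(video, audio) if shortest else max(video, audio)


AUDIO_SECONDS = 180.0  # hypothetical length of the audio track

video = slideshow_seconds(image_count=6, input_fps=1)
with_shortest = output_seconds(video, AUDIO_SECONDS, shortest=True)
without_shortest = output_seconds(video, AUDIO_SECONDS, shortest=False)

print(with_shortest)     # 6.0
print(without_shortest)  # 180.0
```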

You can see the video with the added audio track below:

Adding transition effects to the video slideshow

You can further improve the video slideshow by adding some effects when transitioning from one image to another.

The following adds a fade in-out effect to the images:

$ ffmpeg \
-loop 1 -t 5 -i happy1.jpg \
-loop 1 -t 5 -i happy2.jpg \
-loop 1 -t 5 -i happy3.jpg \
-loop 1 -t 5 -i happy4.jpg \
-loop 1 -t 5 -i happy5.jpg \
-loop 1 -t 5 -i happy6.jpg \
-i freeflow.mp3 \
-filter_complex \
"[0:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=out:st=4:d=1[v0]; \
[1:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=in:st=0:d=1,fade=t=out:st=4:d=1[v1]; \
[2:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=in:st=0:d=1,fade=t=out:st=4:d=1[v2]; \
[3:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=in:st=0:d=1,fade=t=out:st=4:d=1[v3]; \
[4:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=in:st=0:d=1,fade=t=out:st=4:d=1[v4]; \
[5:v]scale=1280:720:force_original_aspect_ratio=decrease,pad=1280:720:(ow-iw)/2:(oh-ih)/2,setsar=1,fade=t=in:st=0:d=1,fade=t=out:st=4:d=1[v5]; \
[v0][v1][v2][v3][v4][v5]concat=n=6:v=1:a=0,format=yuv420p[v]" -map "[v]" -map 6:a -shortest output7.mp4

The -t option specifies the duration in seconds of each image and -loop 1 loops the image so that it lasts for that duration. We add an audio file with -i freeflow.mp3 and then pass a filter graph to -filter_complex, which adds the fade in-out effect. Finally, -map "[v]" selects the filtered video and -map 6:a selects the audio from the seventh input (inputs are numbered from 0).

Some of the images we are using have different sizes, so we use scale with pad to fit the images into a specific size, making them uniform. The fade filter fades each image in or out: t sets the direction, st specifies when the fade starts, and d sets the duration of the fade.
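The filter graph is highly repetitive, so it lends itself to being generated. The Python sketch below rebuilds the same chain for any number of images; the function name fade_filter_graph and its parameters are hypothetical. It joins the chains with a bare semicolon instead of the line continuations used in the shell command, which should be equivalent to FFmpeg.

```python
SCALE_PAD = (
    "scale={w}:{h}:force_original_aspect_ratio=decrease,"
    "pad={w}:{h}:(ow-iw)/2:(oh-ih)/2,setsar=1"
)


def fade_filter_graph(
    count: int,
    clip_seconds: int = 5,
    fade_seconds: int = 1,
    width: int = 1280,
    height: int = 720,
) -> str:
    """Build a -filter_complex string that scales, pads and fades each image."""
    chains = []
    for i in range(count):
        steps = [SCALE_PAD.format(w=width, h=height)]
        if i > 0:
            # Every image except the first fades in from the start of its clip.
            steps.append(f"fade=t=in:st=0:d={fade_seconds}")
        # Every image fades out over the last fade_seconds of its clip.
        steps.append(f"fade=t=out:st={clip_seconds - fade_seconds}:d={fade_seconds}")
        chains.append(f"[{i}:v]" + ",".join(steps) + f"[v{i}]")
    labels = "".join(f"[v{i}]" for i in range(count))
    chains.append(f"{labels}concat=n={count}:v=1:a=0,format=yuv420p[v]")
    return ";".join(chains)


graph = fade_filter_graph(6)
print(graph.replace(";", ";\n"))
```

The resulting string can be passed as the single argument after -filter_complex when the command is run through subprocess, which also avoids shell quoting issues.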

The following is the resulting video:

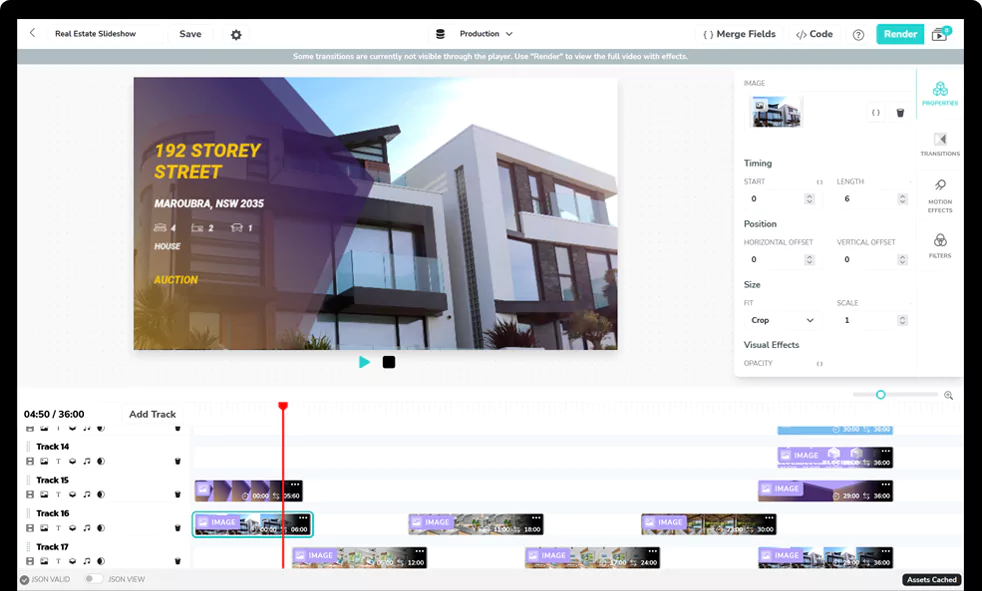

Creating a slideshow video with the Shotstack API

A major advantage that FFmpeg has is its versatility. The official docs peg it as being able to “decode, encode, transcode, mux, demux, stream, filter and play pretty much anything that humans and machines have created.”

A major drawback is its steep learning curve, at least if you want to be competent enough to take full advantage of its features when solving your problems.

The examples above created only a simple slideshow, yet they already required a fair number of options and filters that you need to be comfortable with.

You can make the process of creating and editing videos quicker and easier by using Shotstack, a video editing API that can be used to automate the process at scale using code.

Let’s see how we can create a video slideshow with code using Shotstack.

Video editing using JSON

An edit in Shotstack is a JSON file describing how assets such as images, videos, audio, and fonts are arranged to create a video. The edit is sent to the Shotstack servers, which render the video. Any asset that you use with Shotstack must be hosted online and publicly available.

The JSON for a simple slideshow video with images, soundtrack, fade transition and motion effects would look like:

{
  "timeline": {
    "soundtrack": {
      "src": "https://s3-ap-southeast-2.amazonaws.com/shotstack-assets/music/freeflow.mp3",
      "effect": "fadeOut"
    },
    "background": "#000000",
    "tracks": [
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy1.jpg"
            },
            "start": 0,
            "length": 5,
            "effect": "zoomIn",
            "transition": {
              "in": "fade",
              "out": "fade"
            }
          }
        ]
      },
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy2.jpg"
            },
            "start": 4,
            "length": 5,
            "effect": "slideUp",
            "transition": {
              "out": "fade"
            }
          }
        ]
      },
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy3.jpg"
            },
            "start": 8,
            "length": 5,
            "effect": "slideLeft",
            "transition": {
              "out": "fade"
            }
          }
        ]
      },
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy4.jpg"
            },
            "start": 12,
            "length": 5,
            "effect": "zoomOut",
            "transition": {
              "out": "fade"
            }
          }
        ]
      },
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy5.jpg"
            },
            "start": 16,
            "length": 5,
            "effect": "slideDown",
            "transition": {
              "out": "fade"
            }
          }
        ]
      },
      {
        "clips": [
          {
            "asset": {
              "type": "image",
              "src": "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images/happy6.jpg"
            },
            "start": 20,
            "length": 5,
            "effect": "slideRight",
            "transition": {
              "out": "fade"
            }
          }
        ]
      }
    ]
  },
  "output": {
    "format": "mp4",
    "resolution": "sd"
  }
}

As you can see, this is much easier to read, understand and make changes to.
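Since the edit is plain JSON, it can also be generated rather than typed out. The Python sketch below rebuilds the structure shown above from a list of image URLs and effects. The function name slideshow_edit and its parameters are invented for this example; the field names and values are copied from the JSON above, and each clip starts one second before the previous one ends so that the fades overlap.

```python
import json
from typing import Dict, List

BASE = "https://shotstack-assets.s3-ap-southeast-2.amazonaws.com/images"
SOUNDTRACK = "https://s3-ap-southeast-2.amazonaws.com/shotstack-assets/music/freeflow.mp3"


def slideshow_edit(
    image_urls: List[str],
    effects: List[str],
    soundtrack_url: str,
    clip_seconds: int = 5,
    overlap_seconds: int = 1,
) -> Dict:
    """Build a Shotstack edit with one image clip per track, as in the JSON above."""
    tracks = []
    for i, (url, effect) in enumerate(zip(image_urls, effects)):
        # Only the first clip fades in; every clip fades out.
        transition = {"in": "fade", "out": "fade"} if i == 0 else {"out": "fade"}
        clip = {
            "asset": {"type": "image", "src": url},
            "start": i * (clip_seconds - overlap_seconds),
            "length": clip_seconds,
            "effect": effect,
            "transition": transition,
        }
        tracks.append({"clips": [clip]})
    return {
        "timeline": {
            "soundtrack": {"src": soundtrack_url, "effect": "fadeOut"},
            "background": "#000000",
            "tracks": tracks,
        },
        "output": {"format": "mp4", "resolution": "sd"},
    }


urls = [f"{BASE}/happy{i}.jpg" for i in range(1, 7)]
effects = ["zoomIn", "slideUp", "slideLeft", "zoomOut", "slideDown", "slideRight"]
edit = slideshow_edit(urls, effects, SOUNDTRACK)
print(json.dumps(edit, indent=2))
```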

When you post the JSON to the API, after a few seconds, the video below is generated:

If you would like to know more about how to edit videos using the Shotstack API you can follow along with the Hello World tutorials for Curl or Postman. There is also a tutorial to create a slideshow video using Node.js.
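As a rough illustration of posting an edit to the API, the Python sketch below prepares the HTTP request using only the standard library. The endpoint URL and the x-api-key header are taken from the curl request shown at the end of this article; the Content-Type header, the function name render_request and the stub edit are assumptions made for this example. The request is only built here, not sent.

```python
import json
import urllib.request

RENDER_URL = "https://api.shotstack.io/v1/render"


def render_request(edit: dict, api_key: str) -> urllib.request.Request:
    """Prepare a POST request carrying the edit as a JSON body."""
    body = json.dumps(edit).encode("utf-8")
    return urllib.request.Request(
        RENDER_URL,
        data=body,
        method="POST",
        headers={
            "x-api-key": api_key,
            "Content-Type": "application/json",  # assumed: the body is JSON
        },
    )


# A stub edit; in practice this would be the full slideshow JSON.
stub_edit = {"timeline": {"tracks": []}, "output": {"format": "mp4"}}
request = render_request(stub_edit, "YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

Calling urllib.request.urlopen(request) would submit it, given a valid API key and a complete edit.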

Conclusion

FFmpeg is a versatile multimedia processing tool that can be used to create and edit a wide range of media, including video. We saw how to use it to create a video from a sequence of images. The drawback is that FFmpeg can be difficult to use: you need a working knowledge of the tool and its options to get the exact output you want.

We then looked at how to create a video from images with code using the Shotstack API. Shotstack makes the process much easier as you don’t have to know so many options to create a decent looking slideshow. We created a slideshow containing different images, added audio to the video and used different transition effects for the slides.

Shotstack provides the media generation infrastructure that helps you create videos programmatically and at scale. It abstracts a lot of the underlying fine configuration needed to generate creative and engaging videos. Because it lets you create videos in code, you can also build tools, applications and workflows that automate the whole process.

Get started with Shotstack's video editing API in two steps:

- Sign up for free to get your API key.

- Send an API request to create your video:

curl --request POST 'https://api.shotstack.io/v1/render' \
--header 'x-api-key: YOUR_API_KEY' \
--header 'Content-Type: application/json' \
--data-raw '{
  "timeline": {
    "tracks": [
      {
        "clips": [
          {
            "asset": {
              "type": "video",
              "src": "https://shotstack-assets.s3.amazonaws.com/footage/beach-overhead.mp4"
            },
            "start": 0,
            "length": "auto"
          }
        ]
      }
    ]
  },
  "output": {
    "format": "mp4",
    "size": {
      "width": 1280,
      "height": 720
    }
  }
}'
